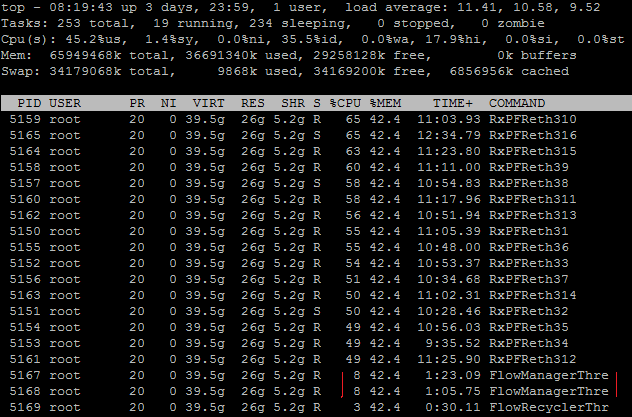

How to inspect 3-4Gbps with Suricata IDPS with 20K rules loaded on 4CPUs(2.5GHz) and 16G RAM server while having minimal drops - less than 1%

Impossible? ... definitely not...

Improbable? ...not really

Setup

3.2-4Gbps of mirrored traffic

4 x CPU - E5420 @ 2.50GHz (4, NOT 8 with hyper-threading, just 4)

16GB RAM

Kernel Level - 3.5.0-23-generic #35~precise1-Ubuntu SMP Fri Jan 25 17:13:26 UTC 2013 x86_64 x86_64 x86_64 GNU/Linux

Network Card - 82599EB 10-Gigabit SFI/SFP+ Network Connection

with driver=ixgbe driverversion=3.17.3

Suricata version 2.0dev (rev 896b614) - some commits above 2.0.1

20K rules - ETPro ruleset

between 400-700K pps

If you want to run Suricata on that HW with about 20,000 rules, inspecting all of the 3-4Gbps traffic with minimal drops, it is just not possible. There are not enough CPUs and not enough RAM.

Sometimes funding is tough: convincing management to buy new or more HW for a particular endeavor or test can be difficult, for any number of reasons.

So what can you do?

BPF

Suricata can utilize BPF (Berkeley Packet Filter) when running inspection. It lets you select and filter the type of traffic you want Suricata to inspect.

There are three ways you can use a BPF filter with Suricata:

On the command line, after the other options -

suricata -c /etc/suricata/suricata.yaml -i eth0 -v dst port 80

Under each respective runmode in suricata.yaml (afpacket, pfring, pcap) -

bpf-filter: port 80 or udp

From a file, with the -F option -

suricata -c /etc/suricata/suricata.yaml -i eth0 -v -F bpf.file

Inside the bpf.file you would have your BPF filter.

The command line example above passes only the traffic that has destination port 80 to Suricata for inspection. The suricata.yaml example passes traffic on port 80 plus all UDP traffic.

BPF - The tricky part

It DOES make a difference when using BPF if you have VLANs in the mirrored traffic.

Please read here before you continue further -

http://taosecurity.blogspot.se/2008/12/bpf-for-ip-or-vlan-traffic.html
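To see why the VLAN tag matters, here is a minimal Python sketch (my own illustration, not part of the sensor setup). An 802.1Q tag adds 4 bytes between the source MAC and the real EtherType, so everything after it, including the IP and TCP headers a filter looks at, sits 4 bytes further into the frame. The MAC addresses and the VLAN ID 100 are made-up values.

```python
import struct

ETHERTYPE_IPV4 = 0x0800
ETHERTYPE_VLAN = 0x8100  # 802.1Q tag protocol identifier


def ethernet_header(vlan_id=None):
    """Build a toy Ethernet header, optionally with one 802.1Q tag."""
    dst = bytes.fromhex("ffffffffffff")  # made-up destination MAC
    src = bytes.fromhex("020000000001")  # made-up source MAC
    if vlan_id is None:
        return dst + src + struct.pack("!H", ETHERTYPE_IPV4)
    # The 4-byte tag (TPID + TCI) sits between the source MAC and the real EtherType.
    return dst + src + struct.pack("!HHH", ETHERTYPE_VLAN, vlan_id & 0x0FFF, ETHERTYPE_IPV4)


def describe(header):
    """Return (EtherType found at byte 12, byte offset where the IP header would start)."""
    (ethertype,) = struct.unpack_from("!H", header, 12)
    return ethertype, len(header)


plain = describe(ethernet_header())
tagged = describe(ethernet_header(vlan_id=100))
print("untagged: ethertype=0x%04x, IP starts at byte %d" % plain)
print("tagged:   ethertype=0x%04x, IP starts at byte %d" % tagged)
```

A filter that only expects plain IP looks at byte 12, finds 0x8100 instead of 0x0800 on tagged frames, and never matches them. That is why mixed traffic needs both an ip and a vlan branch in the filter.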

The magic

If you want to extract all client data, TCP SYN|FIN flags to preserve session state, and server response headers, the BPF filter (thanks to Cooper Nelson (UCSD), who shared the filter on our (OISF) mailing list) would look like this:

(port 53 or 443 or 6667) or (tcp dst port 80 or (tcp src port 80 and

(tcp[tcpflags] & (tcp-syn|tcp-fin) != 0 or tcp[((tcp[12:1] & 0xf0) >>

2):4] = 0x48545450)))

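That would inspect traffic on ports 53 (DNS), 443 (HTTPS), 6667 (IRC) and 80 (HTTP). On port 80, everything from the client is passed, but from the server only SYN/FIN packets and packets whose payload starts with "HTTP".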
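The last part of the filter, tcp[((tcp[12:1] & 0xf0) >> 2):4] = 0x48545450, is the hard one to read. A small Python sketch of what it computes, under my own toy assumptions (a hand-built 20-byte TCP header with no options and an invented payload):

```python
# 0x48545450 is the four ASCII bytes "HTTP", the start of a response line
# such as "HTTP/1.1 200 OK".
magic = (0x48545450).to_bytes(4, "big")


def tcp_payload_offset(byte12):
    """Mirror (tcp[12:1] & 0xf0) >> 2: the TCP header length in bytes.

    The high nibble of byte 12 is the data offset in 32-bit words, so
    (value >> 4) * 4 is the same as (value & 0xf0) >> 2.
    """
    return (byte12 & 0xF0) >> 2


def matches_http_response(tcp_segment):
    """Emulate tcp[offset:4] = 0x48545450 on a raw TCP segment (header + payload)."""
    offset = tcp_payload_offset(tcp_segment[12])
    return tcp_segment[offset:offset + 4] == magic


# Toy segment: 20-byte header with no options (byte 12 = 0x50), then a payload.
header = bytearray(20)
header[12] = 0x50
segment = bytes(header) + b"HTTP/1.1 200 OK\r\n"
print(magic, tcp_payload_offset(0x50), matches_http_response(segment))
```

So the filter skips over the TCP header, however long it is, and compares the first four payload bytes with "HTTP". Server packets that carry the body of a response do not start with those bytes and are filtered out, which is where the saving comes from.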

NOTE: the filter above is for NON VLAN traffic!

Now the same filter for traffic with VLANs present would look like this:

((ip and port 53 or 443 or 6667) or ( ip and tcp dst port 80 or (ip

and tcp src port 80 and (tcp[tcpflags] & (tcp-syn|tcp-fin) != 0 or

tcp[((tcp[12:1] & 0xf0) >> 2):4] = 0x48545450))))

or

((vlan and port 53 or 443 or 6667) or ( vlan and tcp dst port 80 or

(vlan and tcp src port 80 and (tcp[tcpflags] & (tcp-syn|tcp-fin) != 0

or tcp[((tcp[12:1] & 0xf0) >> 2):4] = 0x48545450))))

BPF - my particular case

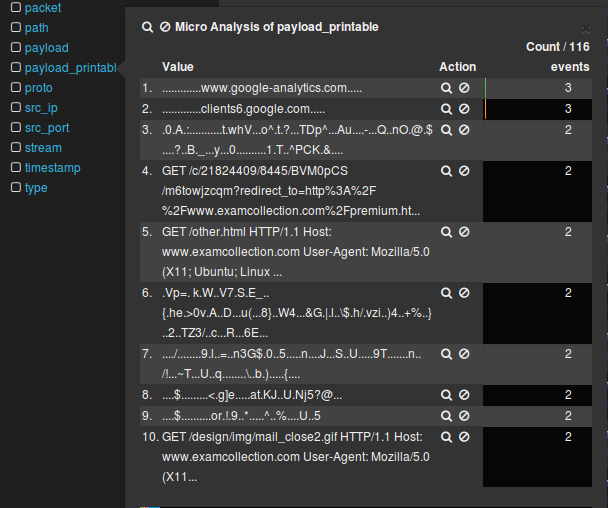

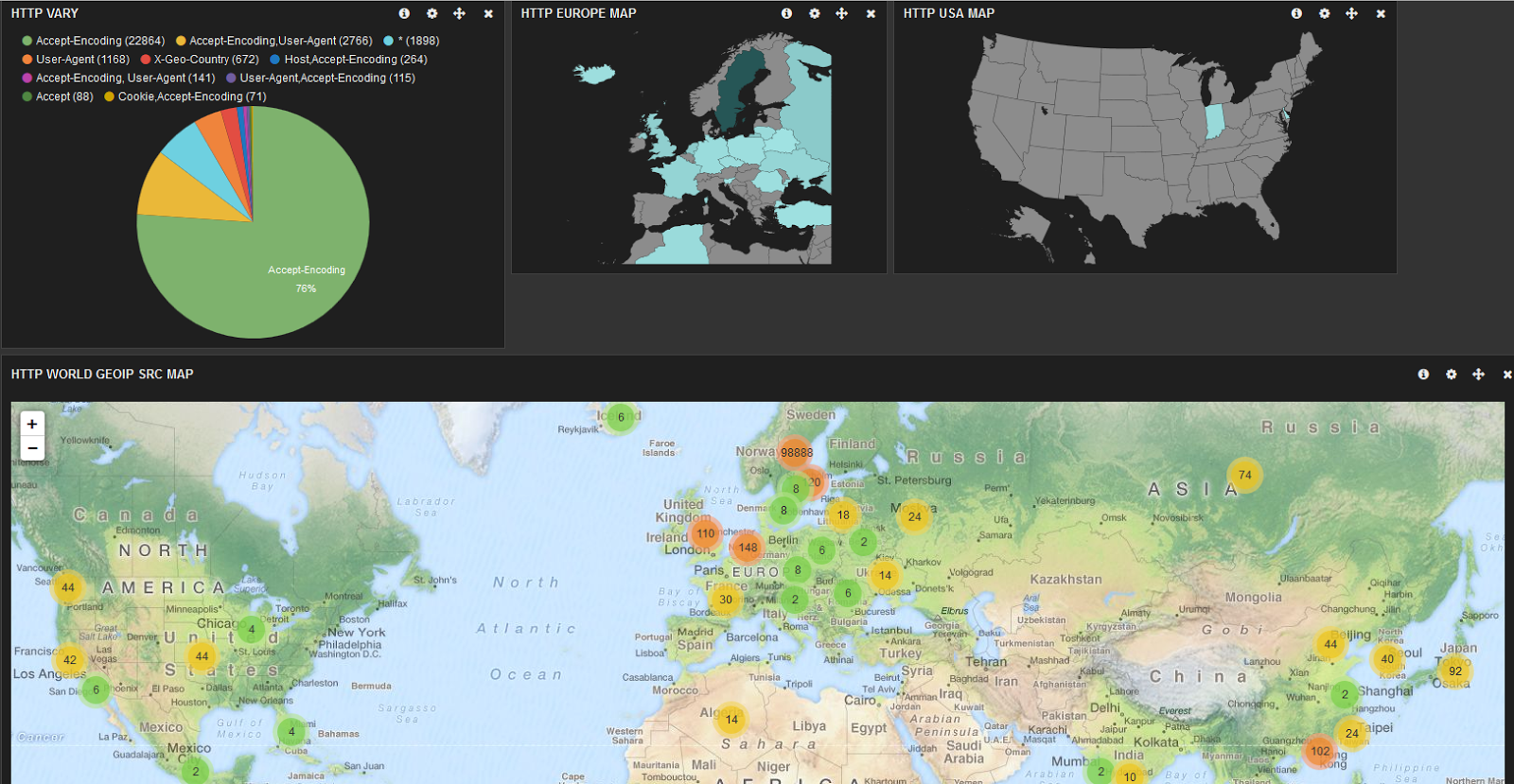

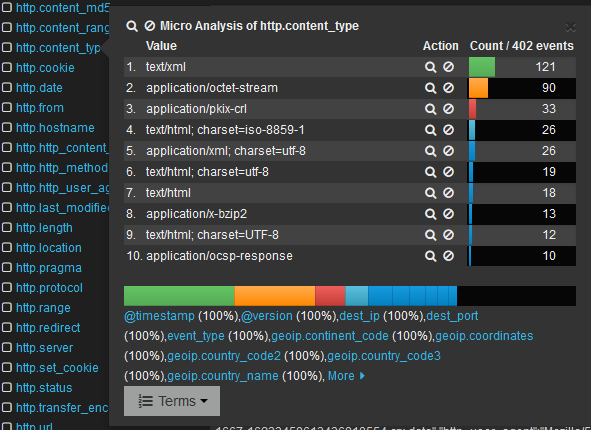

I did some traffic profiling on the sensor and it could be summed up like this (using iptraf):

You can see that there is just as much traffic on port 53 (DNS) as there is HTTP traffic. I was facing some tough choices...

The bpf filter that I made for this particular case was:

(

(ip and port 20 or 21 or 22 or 25 or 110 or 161 or 443 or 445 or 587 or 6667)

or ( ip and tcp dst port 80 or (ip and tcp src port 80 and

(tcp[tcpflags] & (tcp-syn|tcp-fin) != 0 or

tcp[((tcp[12:1] & 0xf0) >> 2):4] = 0x48545450))))

or

((vlan and port 20 or 21 or 22 or 25 or 110 or 161 or 443 or 445 or 587 or 6667)

or ( vlan and tcp dst port 80 or (vlan and tcp src port 80 and

(tcp[tcpflags] & (tcp-syn|tcp-fin) != 0 or

tcp[((tcp[12:1] & 0xf0) >> 2):4] = 0x48545450)))

)

That would filter MIXED (both VLAN and NON VLAN) traffic on ports

- 20/21 (FTP)

- 22 (SSH)

- 25 (SMTP)

- 80 (HTTP)

- 110 (POP3)

- 161 (SNMP)

- 443 (HTTPS)

- 445 (Microsoft-DS Active Directory, Windows shares)

- 587 (MSA - SMTP)

- 6667 (IRC)

and pass it to Suricata for inspection.
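Editing a filter like that by hand gets error prone, because every port change has to be made twice (once in the ip half, once in the vlan half). Here is a minimal Python sketch that generates a filter with the same structure from a port list. The function names are my own, hypothetical helpers, and the output should still be checked against your traffic before you rely on it.

```python
HTTP_RESPONSE = "tcp[((tcp[12:1] & 0xf0) >> 2):4] = 0x48545450"
SYN_OR_FIN = "tcp[tcpflags] & (tcp-syn|tcp-fin) != 0"


def branch(link, ports):
    """Build one half of the filter; link is 'ip' or 'vlan'."""
    port_list = " or ".join(str(p) for p in ports)
    return (
        f"(({link} and port {port_list}) "
        f"or ({link} and tcp dst port 80 or ({link} and tcp src port 80 and "
        f"({SYN_OR_FIN} or {HTTP_RESPONSE}))))"
    )


def mixed_filter(ports):
    """Join the non-VLAN and VLAN halves, as in the filter above."""
    return f"{branch('ip', ports)} or {branch('vlan', ports)}"


ports = [20, 21, 22, 25, 110, 161, 443, 445, 587, 6667]
print(mixed_filter(ports))
```

The printed string can be redirected into the file that is later handed to Suricata with -F. Dropping a port then means removing one number from the list.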

I had to drop DNS. I am not saying this is the right thing to do, but tough times call for tough measures. I had a seriously undersized server (4 CPUs at 2.5GHz, 16GB RAM) and traffic between 3-4Gbps.

How it is actually done

Suricata

root@snif01:/home/pmanev# suricata --build-info

This is Suricata version 2.0dev (rev 896b614)

Features: PCAP_SET_BUFF LIBPCAP_VERSION_MAJOR=1 PF_RING AF_PACKET HAVE_PACKET_FANOUT LIBCAP_NG LIBNET1.1 HAVE_HTP_URI_NORMALIZE_HOOK HAVE_NSS HAVE_LIBJANSSON

SIMD support: SSE_4_1 SSE_3

Atomic intrisics: 1 2 4 8 16 byte(s)

64-bits, Little-endian architecture

GCC version 4.6.3, C version 199901

compiled with -fstack-protector

compiled with _FORTIFY_SOURCE=2

L1 cache line size (CLS)=64

compiled with LibHTP v0.5.11, linked against LibHTP v0.5.11

Suricata Configuration:

AF_PACKET support: yes

PF_RING support: yes

NFQueue support: no

NFLOG support: no

IPFW support: no

DAG enabled: no

Napatech enabled: no

Unix socket enabled: yes

Detection enabled: yes

libnss support: yes

libnspr support: yes

libjansson support: yes

Prelude support: no

PCRE jit: no

LUA support: no

libluajit: no

libgeoip: yes

Non-bundled htp: no

Old barnyard2 support: no

CUDA enabled: no

Suricatasc install: yes

Unit tests enabled: no

Debug output enabled: no

Debug validation enabled: no

Profiling enabled: no

Profiling locks enabled: no

Coccinelle / spatch: no

Generic build parameters:

Installation prefix (--prefix): /usr/local

Configuration directory (--sysconfdir): /usr/local/etc/suricata/

Log directory (--localstatedir) : /usr/local/var/log/suricata/

Host: x86_64-unknown-linux-gnu

GCC binary: gcc

GCC Protect enabled: no

GCC march native enabled: yes

GCC Profile enabled: no

In suricata.yaml

#max-pending-packets: 1024

max-pending-packets: 65534

...

# Runmode the engine should use. Please check --list-runmodes to get the available

# runmodes for each packet acquisition method. Defaults to "autofp" (auto flow pinned

# load balancing).

#runmode: autofp

runmode: workers

.....

.....

af-packet:

  - interface: eth2

    # Number of receive threads (>1 will enable experimental flow pinned

    # runmode)

    threads: 4

    # Default clusterid. AF_PACKET will load balance packets based on flow.

    # All threads/processes that will participate need to have the same

    # clusterid.

    cluster-id: 98

    # Default AF_PACKET cluster type. AF_PACKET can load balance per flow or per hash.

    # This is only supported for Linux kernel > 3.1

    # possible value are:

    # * cluster_round_robin: round robin load balancing

    # * cluster_flow: all packets of a given flow are send to the same socket

    # * cluster_cpu: all packets treated in kernel by a CPU are send to the same socket

    cluster-type: cluster_cpu

    # In some fragmentation case, the hash can not be computed. If "defrag" is set

    # to yes, the kernel will do the needed defragmentation before sending the packets.

    defrag: yes

    # To use the ring feature of AF_PACKET, set 'use-mmap' to yes

    use-mmap: yes

    # Ring size will be computed with respect to max_pending_packets and number

    # of threads. You can set manually the ring size in number of packets by setting

    # the following value. If you are using flow cluster-type and have really network

    # intensive single-flow you could want to set the ring-size independantly of the number

    # of threads:

    ring-size: 200000

    # On busy system, this could help to set it to yes to recover from a packet drop

    # phase. This will result in some packets (at max a ring flush) being non treated.

    #use-emergency-flush: yes

    # recv buffer size, increase value could improve performance

    # buffer-size: 100000

    # Set to yes to disable promiscuous mode

    # disable-promisc: no

    # Choose checksum verification mode for the interface. At the moment

    # of the capture, some packets may be with an invalid checksum due to

    # offloading to the network card of the checksum computation.

    # Possible values are:

    # - kernel: use indication sent by kernel for each packet (default)

    # - yes: checksum validation is forced

    # - no: checksum validation is disabled

    # - auto: suricata uses a statistical approach to detect when

    # checksum off-loading is used.

    # Warning: 'checksum-validation' must be set to yes to have any validation

    #checksum-checks: kernel

    # BPF filter to apply to this interface. The pcap filter syntax apply here.

    #bpf-filter: port 80 or udp

....

....

detect-engine:

  - profile: high

  - custom-values:

      toclient-src-groups: 2

      toclient-dst-groups: 2

      toclient-sp-groups: 2

      toclient-dp-groups: 3

      toserver-src-groups: 2

      toserver-dst-groups: 4

      toserver-sp-groups: 2

      toserver-dp-groups: 25

  - sgh-mpm-context: auto

...

flow-timeouts:

  default:

    new: 5 #30

    established: 30 #300

    closed: 0

    emergency-new: 1 #10

    emergency-established: 2 #100

    emergency-closed: 0

  tcp:

    new: 5 #60

    established: 60 # 3600

    closed: 1 #30

    emergency-new: 1 # 10

    emergency-established: 5 # 300

    emergency-closed: 0 #20

  udp:

    new: 5 #30

    established: 60 # 300

    emergency-new: 5 #10

    emergency-established: 5 # 100

  icmp:

    new: 5 #30

    established: 60 # 300

    emergency-new: 5 #10

    emergency-established: 5 # 100

....

....

stream:

  memcap: 4gb

  checksum-validation: no # yes would reject wrong csums

  midstream: false

  prealloc-sessions: 50000

  inline: no # auto will use inline mode in IPS mode, yes or no set it statically

  reassembly:

    memcap: 8gb

    depth: 12mb # reassemble 12mb into a stream

    toserver-chunk-size: 2560

    toclient-chunk-size: 2560

    randomize-chunk-size: yes

    #randomize-chunk-range: 10

...

...

default-rule-path: /etc/suricata/et-config/

rule-files:

- trojan.rules

- malware.rules

- local.rules

- activex.rules

- attack_response.rules

- botcc.rules

- chat.rules

- ciarmy.rules

- compromised.rules

- current_events.rules

- dos.rules

- dshield.rules

- exploit.rules

- ftp.rules

- games.rules

- icmp_info.rules

- icmp.rules

- imap.rules

- inappropriate.rules

- info.rules

- misc.rules

- mobile_malware.rules ##

- netbios.rules

- p2p.rules

- policy.rules

- pop3.rules

- rbn-malvertisers.rules

- rbn.rules

- rpc.rules

- scada.rules

- scada_special.rules

- scan.rules

- shellcode.rules

- smtp.rules

- snmp.rules

...

....

libhtp:

  default-config:

    personality: IDS

    # Can be specified in kb, mb, gb. Just a number indicates

    # it's in bytes.

    request-body-limit: 12mb

    response-body-limit: 12mb

    # inspection limits

    request-body-minimal-inspect-size: 32kb

    request-body-inspect-window: 4kb

    response-body-minimal-inspect-size: 32kb

    response-body-inspect-window: 4kb

    # decoding

    double-decode-path: no

    double-decode-query: no

Create the BPF file (you can put it anywhere):

touch /home/pmanev/test/bpf-filter

The bpf-filter should look like this:

root@snif01:/var/log/suricata# cat /home/pmanev/test/bpf-filter

(

(ip and port 20 or 21 or 22 or 25 or 110 or 161 or 443 or 445 or 587 or 6667)

or ( ip and tcp dst port 80 or (ip and tcp src port 80 and

(tcp[tcpflags] & (tcp-syn|tcp-fin) != 0 or

tcp[((tcp[12:1] & 0xf0) >> 2):4] = 0x48545450))))

or

((vlan and port 20 or 21 or 22 or 25 or 110 or 161 or 443 or 445 or 587 or 6667)

or ( vlan and tcp dst port 80 or (vlan and tcp src port 80 and

(tcp[tcpflags] & (tcp-syn|tcp-fin) != 0 or

tcp[((tcp[12:1] & 0xf0) >> 2):4] = 0x48545450)))

)

root@snif01:/var/log/suricata#

Start Suricata like this:

suricata -c /etc/suricata/peter-yaml/suricata-afpacket.yaml --af-packet=eth2 -D -v -F /home/pmanev/test/bpf-filter

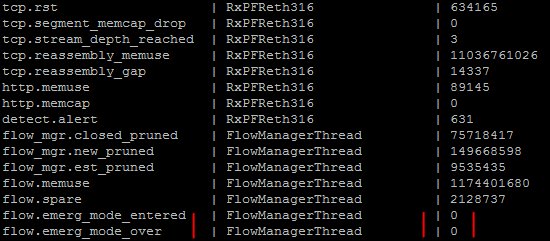

With this approach I was able to inspect 3.2-4Gbps with about 20K rules at about 1% drops, although it took one more filter adjustment to get there.

In the suricata.log:

root@snif01:/var/log/suricata# more suricata.log

[1274] 21/6/2014 -- 19:36:35 - (suricata.c:1034) <Notice> (SCPrintVersion) -- This is Suricata version 2.0dev (rev 896b614)

[1274] 21/6/2014 -- 19:36:35 - (util-cpu.c:170) <Info> (UtilCpuPrintSummary) -- CPUs/cores online: 4

......

[1275] 21/6/2014 -- 19:36:46 - (detect.c:452) <Info> (SigLoadSignatures) -- 46 rule files processed. 20591 rules successfully loaded, 8 rules failed

[1275] 21/6/2014 -- 19:36:47 - (detect.c:2591) <Info> (SigAddressPrepareStage1) -- 20599 signatures processed. 827 are IP-only rules, 6510 are inspecting packet payload, 15650 inspect application layer, 0 are decoder event only

.....

.....

[1275] 21/6/2014 -- 19:37:17 - (runmode-af-packet.c:150) <Info> (ParseAFPConfig) -- Going to use command-line provided bpf filter '( (ip and port 20 or 21 or 22 or 25 or 110 or 161 or 443 or 445 or 587 or 6667) or ( ip and tcp dst port 80 or (ip and tcp src port 80 and (tcp[tcpflags] & (tcp-syn|tcp-fin) != 0 or tcp[((tcp[12:1] & 0xf0) >> 2):4] = 0x48545450)))) or ((vlan and port 20 or 21 or 22 or 25 or 110 or 161 or 443 or 445 or 587 or 6667) or ( vlan and tcp dst port 80 or (vlan and tcp src port 80 and (tcp[tcpflags] & (tcp-syn|tcp-fin) != 0 or tcp[((tcp[12:1] & 0xf0) >> 2):4] = 0x48545450))) ) '

.....

.....

[1275] 22/6/2014 -- 01:45:34 - (stream.c:182) <Info> (StreamMsgQueuesDeinit) -- TCP segment chunk pool had a peak use of 6674 chunks, more than the prealloc setting of 250

[1275] 22/6/2014 -- 01:45:34 - (host.c:245) <Info> (HostPrintStats) -- host memory usage: 825856 bytes, maximum: 16777216

[1275] 22/6/2014 -- 01:45:35 - (detect.c:3890) <Info> (SigAddressCleanupStage1) -- cleaning up signature grouping structure... complete

[1275] 22/6/2014 -- 01:45:35 - (util-device.c:190) <Notice> (LiveDeviceListClean) -- Stats for 'eth2': pkts: 2820563520, drop: 244696588 (8.68%), invalid chksum: 0

That gave me about 9% drops. I further adjusted the filter after realizing I could drop port 445 (Windows shares) from inspection for the moment.

The new filter was like so:

root@snif01:/var/log/suricata# cat /home/pmanev/test/bpf-filter

(

(ip and port 20 or 21 or 22 or 25 or 110 or 161 or 443 or 587 or 6667)

or ( ip and tcp dst port 80 or (ip and tcp src port 80 and

(tcp[tcpflags] & (tcp-syn|tcp-fin) != 0 or

tcp[((tcp[12:1] & 0xf0) >> 2):4] = 0x48545450))))

or

((vlan and port 20 or 21 or 22 or 25 or 110 or 161 or 443 or 587 or 6667)

or ( vlan and tcp dst port 80 or (vlan and tcp src port 80 and

(tcp[tcpflags] & (tcp-syn|tcp-fin) != 0 or

tcp[((tcp[12:1] & 0xf0) >> 2):4] = 0x48545450)))

)

root@snif01:/var/log/suricata#

Notice - I removed port 445.

So with that filter I was able to do 0.95% drops with 20K rules:

[16494] 22/6/2014 -- 10:13:10 - (suricata.c:1034) <Notice> (SCPrintVersion) -- This is Suricata version 2.0dev (rev 896b614)

[16494] 22/6/2014 -- 10:13:10 - (util-cpu.c:170) <Info> (UtilCpuPrintSummary) -- CPUs/cores online: 4

...

...

[16495] 22/6/2014 -- 10:13:20 - (detect.c:452) <Info> (SigLoadSignatures) -- 46 rule files processed. 20591 rules successfully loaded, 8 rules failed

[16495] 22/6/2014 -- 10:13:21 - (detect.c:2591) <Info> (SigAddressPrepareStage1) -- 20599 signatures processed. 827 are IP-only rules, 6510 are inspecting packet payload, 15650 inspect application layer, 0 are decoder event only

...

...

[16495] 23/6/2014 -- 01:45:32 - (host.c:245) <Info> (HostPrintStats) -- host memory usage: 1035520 bytes, maximum: 16777216

[16495] 23/6/2014 -- 01:45:32 - (detect.c:3890) <Info> (SigAddressCleanupStage1) -- cleaning up signature grouping structure... complete

[16495] 23/6/2014 -- 01:45:32 - (util-device.c:190) <Notice> (LiveDeviceListClean) -- Stats for 'eth2': pkts: 6550734692, drop: 62158315 (0.95%), invalid chksum: 0
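If you want to compare runs without reading the logs by eye, the drop rate can be recomputed from the pkts and drop counters in the final stats line. A minimal Python sketch, assuming the log line format shown above (the regular expression and the function name are my own):

```python
import re

STATS_RE = re.compile(r"pkts: (\d+), drop: (\d+)")


def drop_percent(log_line):
    """Return dropped packets as a percentage of pkts, or None if no stats are found."""
    match = STATS_RE.search(log_line)
    if match is None:
        return None
    pkts, drop = (int(group) for group in match.groups())
    return 100.0 * drop / pkts if pkts else 0.0


first_run = "Stats for 'eth2': pkts: 2820563520, drop: 244696588 (8.68%), invalid chksum: 0"
second_run = "Stats for 'eth2': pkts: 6550734692, drop: 62158315 (0.95%), invalid chksum: 0"
for line in (first_run, second_run):
    print(f"{drop_percent(line):.2f}%")
```

Both values agree with the percentages Suricata printed for the two runs (8.68% with port 445 in the filter, 0.95% without it).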

So with that BPF filter we have ->

Pros

I was able to inspect a lot of traffic (4Gbps peak) with a lot of rules (20K) on undersized, minimal HW (4 CPUs, 16GB RAM), sustained with less than 1% drops

Cons

- Not inspecting DNS, nor port 445 (Windows shares) after the second adjustment

- Making an assumption that all HTTP traffic is using port 80. (Though in my case 99.9% of the HTTP traffic was on port 80)

- This is an advanced BPF filter; it requires a good chunk of knowledge to understand, implement and re-edit

Simple and efficient

In the case where you have a network or a device that generates a lot of false positives and you are sure you can disregard any traffic from that device - you could do a filter like this:

(ip and not host 1.1.1.1 ) or (vlan and not host 1.1.1.1)

for mixed VLAN and non VLAN traffic. If you are sure there is no VLAN traffic, you could just do this:

ip and not host 1.1.1.1

Then you can simply start Suricata like so:

suricata -c /etc/suricata/peter-yaml/suricata-afpacket.yaml --af-packet=eth2 -D -v \(ip and not host 1.1.1.1 \) or \(vlan and not host 1.1.1.1\)

or like this, respectively (matching the two filters above):

suricata -c /etc/suricata/peter-yaml/suricata-afpacket.yaml --af-packet=eth2 -D -v ip and not host 1.1.1.1
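If the list of noisy devices grows, the same pattern can be generated instead of typed. A minimal Python sketch, with a hypothetical helper name and a made-up second address (2.2.2.2). It also uses shlex.quote to wrap the filter in single quotes, on the assumption that you would rather pass it as one quoted argument than escape every parenthesis by hand:

```python
import shlex


def exclude_hosts(hosts, with_vlan=True):
    """Build a BPF filter that skips traffic to or from the given hosts."""
    skipped = " or ".join(f"host {h}" for h in hosts)
    plain = f"ip and not ({skipped})"
    if not with_vlan:
        return plain
    return f"({plain}) or (vlan and not ({skipped}))"


bpf = exclude_hosts(["1.1.1.1", "2.2.2.2"])
# shlex.quote wraps the filter in single quotes so the shell passes the
# parentheses through untouched.
command = (
    "suricata -c /etc/suricata/peter-yaml/suricata-afpacket.yaml "
    "--af-packet=eth2 -D -v " + shlex.quote(bpf)
)
print(command)
```

The sketch only prints the command line and does not start Suricata. For longer host lists it is probably cleaner to write the filter to a file and use -F, as in the earlier examples.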